Your Convenience Is Their Business Model

The consumer surveillance infrastructure being built around you isn’t a side effect of the tech industry: it’s the product built with your data. And it’s never been more visible… or more convenient to ignore.

By Don Lupo

During Super Bowl LX, Amazon ran an ad for their new Ring Search Party feature starring a lost dog, a worried family, and neighbors working together toward a happy family reunion. The public backlash against the ad was justified. (And that VO: ugh.)

The ad showed Ring doorbell cameras scanning the block and AI matching fur patterns: a clear example of mass surveillance infrastructure being marketed as a feature. Don’t you want to help lost dogs?

Ring Search Party works by scanning footage across an entire neighborhood’s network of Ring devices, using AI analysis to identify matching subjects across multiple private cameras.

The company also offers a separate feature called Familiar Faces, which runs facial recognition on anyone who appears in view of your doorbell camera and matches them against a saved list of biometric data. (Not ominous at all.) The Electronic Frontier Foundation (EFF) noted the obvious implication in its analysis of the ad: the technology that finds a dog today can also track you down tomorrow, or anyone it deems “undesirable”.

Ring’s history is dubious at best. Amazon is already giving this data to law enforcement freely and willingly. (The company also gave employees extensive access to customer footage without adequate controls.) Amazon courted police departments with free hardware giveaways as far back as 2016, provided warrantless law enforcement access to footage for years, and after claiming to end that practice, established formal partnerships with Axon and Flock Safety that allow law enforcement to again request footage directly from users. The feature that lets them do so is turned on by default.

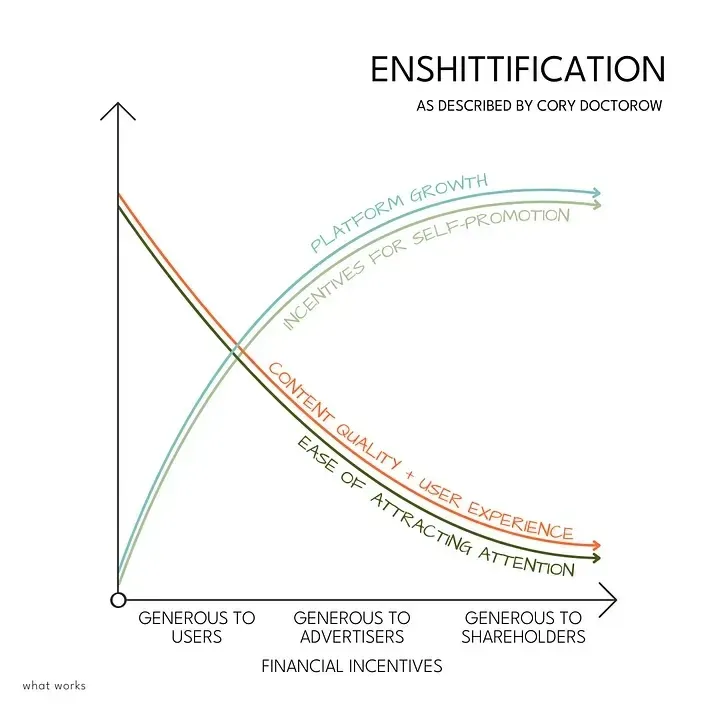

The enshittification of the internet is also the machinery that ushers in a new wave of surveillance capitalism under the guise of consumer convenience. It’s a familiar template: a company offers a new “feature” with the corresponding infrastructure, collects as much data as possible from the beginning, and makes opting out possible but difficult to enable. Oh, and you’d better be happy paying for that subscription.

By the time people understand what they’ve installed on their homes, the network is everywhere and your data is saved neatly on their servers. It’s too late.

Meta Already Paid for the Lesson

Meta should know better. They paid more than $7 billion learning this hard lesson.

In 2019, Facebook settled with the FTC for $5 billion over allegations that included deceptive facial recognition practices. In 2021, it paid $650 million to Illinois consumers under that state’s Biometric Information Privacy Act, then deleted over a billion face templates and shut down its facial recognition system entirely. In 2024, it paid Texas $1.4 billion for the same category of violation.

Now, according to reporting by the New York Times citing an internal Meta document, the company is considering adding facial recognition to its smart glasses. (Sound familiar yet?) The document reportedly notes that Meta may launch the feature during what it characterized as a “dynamic political environment” where civil society organizations that would normally push back would have their resources focused elsewhere.

Read that again, out loud if you need to. This is not a company that got caught and changed course, realizing the error of its ways. This is a company that paid billions, waited for what it judged to be a favorable moment, and is moving forward anyway. The fines were simply a cost of doing business. The ambition to excel at surveillance is still there.

Your biometric data is not like a password; you can’t change it when it’s compromised. It is unique, permanent, and increasingly useful to anyone who wants to identify, locate, or track you. None of us has consented to having our faces scanned by someone’s glasses while we’re outside our homes. But the consent problem is not a technical challenge for Meta: it’s a design choice made in service of their ambition.

And as of this writing, Meta has patented an AI technology that takes over your account when you die. What could go wrong?

The Surveillance You’re Already Paying For

But hey: you don’t need to wait for smart glasses to become the norm or for AI pendants from Apple to track you. The most pervasive surveillance of your professional and personal life is already running, and there’s a good chance you or your company is paying for it monthly.

Microsoft 365 is the de facto standard across most industries, and it now ships with Copilot deeply embedded across Word, Excel, Outlook, Teams, and PowerPoint. To function, Copilot requires that your documents, emails, and meeting transcripts flow through Microsoft’s cloud infrastructure, where they are processed, indexed, and retained according to terms most users have never read.

That’s right: Your strategy decks, client briefs, unreleased campaign concepts, your salary discussions in Teams… all of it is flowing through a system owned by a company whose AI division has a direct commercial interest in the patterns your work contains. (And it is already producing what can be a “bug” for consumers but a feature for Microsoft.)

Microsoft’s data retention and usage policies are not secret, but they are ridiculously long, frequently updated, and understood only by attorneys. Most organizations clicked through an enterprise agreement, enabled Copilot because it was included in the license tier they were already paying for, and now have a detailed record of their internal operations sitting in Microsoft’s cloud. It’s so convenient to just click “Accept” and move on.

The default settings in most enterprise deployments favor Microsoft’s data collection, not the customer’s privacy. Opting out requires IT resources, company policy decisions, and organizational fortitude that most small- to mid-size agencies simply don’t have. They need to keep working.

This is the surveillance capitalism model operating at its most efficient: not a product you chose and paid for, but a feature bundled into infrastructure that becomes increasingly difficult to leave. The data collection is vaguely disclosed, and you have not consciously consented to it because the alternative is rebuilding your entire operational stack. The institutional lock-in is the point, and the data collection comes with it. (Data portability is a whole different but worthwhile subject.)

Apple, for comparison, has built a genuinely different architecture: its AI processing happens on-device by default, and the company’s business model doesn’t depend on monetizing your content. (Not yet, anyway.)

That distinction matters. But Apple is also accelerating development of always-on camera wearables, including smart glasses targeting a 2027 launch and camera-equipped AirPods potentially arriving this year. (I don’t know why my earphones would need cameras, but…)

The entire industry is converging on ambient, always-on AI hardware, and the privacy implications of that convergence are being set now, in the defaults and the Terms of Service, with the full realization that few people are paying attention.

While it’s true that Apple may still be the most “privacy-focused” of the major consumer technology providers (see: “Privacy is a fundamental human right”), and most people will not take the time to learn how to install GrapheneOS on their mobile devices or Tails Linux on their laptops, the company is still gathering data through every means available.

Who Gets to Build the Future

The digital elephant in the room is that the AI boom isn’t just changing how we work, who gets access to our data, and what our many devices actually do (yes, your Roomba is mapping your house and selling the data to third parties): this nascent phase of AI integration into our lives is reshaping who can afford to build digital devices themselves.

Phison CEO Pua Khein-Seng recently warned in an interview that the AI industry’s voracious demand for memory chips has created a crisis severe enough that many consumer electronics manufacturers will go bankrupt or exit product lines by the end of 2026. That is a dire warning.

Memory manufacturers are reportedly demanding three years of prepayment, and the shortage is estimated internally to run through 2030 or beyond. Samsung, SK Hynix, and Sandisk are reported to be planning to double NAND flash prices this year.

The consequence isn’t just that your next laptop will cost more or that you won’t be able to add memory to your kid’s gaming PC. It’s that the companies large enough to weather this (Apple, Amazon, Google, Meta) are the same companies building the surveillance layer. Consolidation in the hardware supply chain means that consolidation in the platforms running on that hardware, built with the chips you can no longer buy, will move faster as well. The economy will be left with the surveillance business models already in place as the only option.

And keep in mind that there is already talk of consumer-grade computers being offered in the very near future that are not yours to own: you will likely “rent” a computer in the cloud and work from a dumb terminal. Jeff Bezos is already on board, and you should be worried about that. He has the power and money to create whichever system will bring him more of both.

Those who are in control of these systems have a vested interest in making sure you do not own anything anymore. But they do want to control your data.

The Part You Can Actually Control

Sure, the Ring cameras in your neighborhood and the Meta glasses being worn by a tech bro at Coachella may not be things you can actually avoid. But the level of surveillance on your own devices is something you can control with a simple mantra: Security is Never Convenient.

The browser is the most important single choice most people don’t think about carefully. Google Chrome was built to keep you logged in, synced, and identifiable across the web. That makes you the product being sold. When you use Chrome, you are feeding the same dangerous data ecosystem that Ring and Meta encourage. Even the FBI issued a warning.

But fear not: you have choices, and they are easy to implement. Firefox with the uBlock Origin extension blocks requests to tracking infrastructure before they load: not just cookies, but many of the scripts behind the fingerprinting techniques companies use when cookies are blocked. And the EFF has a handy extension called Privacy Badger, which works with most leading browsers to protect you.

Brave offers similar protection with less configuration required. (But the CEO has a sketchy civil rights past. Caveat emptor.) These browsers offer the same if not better functionality: Brave is built on Chromium, which means you can use all of your Chrome extensions. Security is never convenient, but this is pretty easy.

And then there’s the Microsoft problem: bloated software with ridiculous subscription rates (and that whole issue with trying to place an image in Word). However, LibreOffice is a mature, fully capable office suite that handles Word, Excel, and PowerPoint formats natively, costs nothing, and doesn’t route your documents through a cloud AI system. (Full disclosure: I have been using it for two years with zero compatibility issues with Office files. The transition was smooth as silk.)

LibreOffice is a free (as in beer) and open source (as in freedom) software project with contributions from developers around the world. As with all free software, it benefits all of us to donate to the project.

For organizations where the Copilot integration is a genuine concern, such as law firms, agencies with NDAs, and anyone handling client data with confidentiality obligations, LibreOffice is not a compromise. It’s a cleaner tool for a specific class of work. And it just works.

You will hear the usual argument that your clients use Office, your vendors use Teams, your whole workflow is entangled. I get it. That’s a real concern. But the depth to which any organization is entangled in the Office ecosystem is worth examining.

The reason leaving Microsoft is hard is the same reason the data collection is so comprehensive: you’re inside a system specifically engineered so that leaving allegedly costs more than staying. (We are back to enshittification.) It doesn’t mean that you have to switch immediately; it means trying it out for a few days or a week, and deciding if it works for you. It also means deciding if your security is worth trading off for convenience.

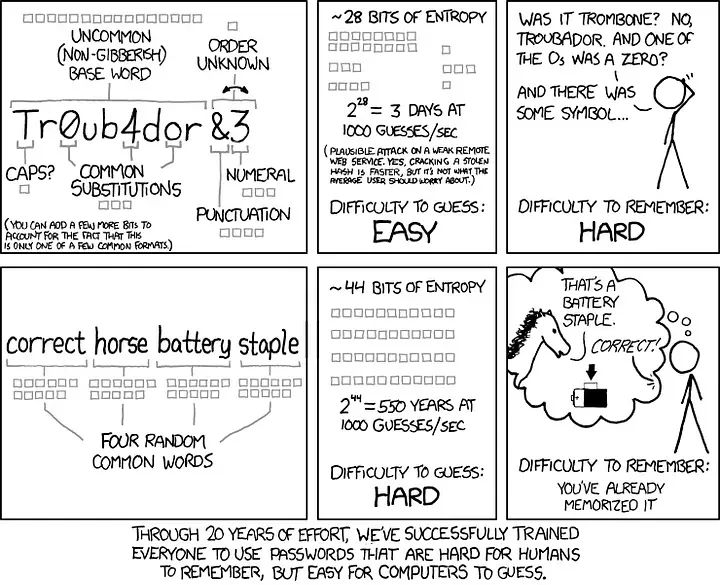

Another example is how Signal replaces SMS and standard calls with end-to-end encryption for conversations you’d prefer not to have indexed. Or how Bitwarden fixes consumers’ password reuse habit (still the most exploited single vulnerability) with an open-source password manager that has been independently audited and doesn’t require trusting a commercial vault with your master credentials.

None of these require suffering, wailing, or gnashing of teeth. Once you get used to the new and safer way, it will be just as easy. They only require patience and a willingness to step out of familiarity and convenience. The big commercial alternatives are engineered to feel easy: you avoid difficulty by conveniently using what everyone else does (while paying more and sharing more data).

That asymmetry is a business decision, not a law of nature. You can overcome it. I believe in you.

Five Things Worth Doing This Week

- Switch your browser. Replace Chrome with Firefox plus the uBlock Origin extension, or Brave if you want less configuration and your favorite extensions from Chrome. This is the highest-leverage single change you can make, because it breaks the cross-site identification layer that feeds the surveillance advertising ecosystem. So many words. You get it.

- Use LibreOffice, especially for sensitive documents. You don’t have to abandon Microsoft 365 entirely, but strategy documents, client briefs, unreleased creative, and anything under NDA should not be routed through Copilot’s cloud processing. LibreOffice handles all Office formats natively, costs nothing (but please donate), and keeps your content off Microsoft’s servers. Trust me.

- Move sensitive conversations to Signal. You don’t have to move completely: we all like being the blue bubble in the group chat. But maybe use it for the ones where you’d be uncomfortable with the contents being subpoenaed, data-breached, or sold. Signal is free, widely used, and end-to-end encrypted by default.

- Use a password manager. For the love of all that is holy, stop using the same passwords. Bitwarden is open-source, free for personal use, and audited. If you’re reusing passwords across accounts (and most people are), this is the most urgent fix you can make. (You know who you are. Yeah, I’m talkin’ to you.)

- Audit your Ring settings. If you have a Ring device (it’s OK: we all get duped sometimes), open the app and check your Control Center. Search Party, Neighbors data sharing, and law enforcement request settings are all turned on by default. Turn off what you did not knowingly choose.

The Longer View

All of this is a set of choices. Corporations have chosen to make you and your data the product. Engagement is the goal, because it generates patterns that are connected to you. But the vast majority of us accept the convenience without reading the terms. We click “Accept” and then go our merry ways.

The surveillance infrastructure continues being assembled right now in doorbell cameras, in social media glasses, in soon-to-be-released AI pendants worn around people’s necks. And it’s all being built with consumer dollars and consumer data.

The companies building it are making calculated bets that people will choose convenience over privacy every time (our economy is built on it), and that the political and regulatory environment will remain favorable long enough to entrench the infrastructure into societal norms before serious resistance emerges. I mean, try telling someone that they shouldn’t use Microsoft Office: they’ll look at you like you’ve gone absolutely mad.

The internal Meta document that described timing a massive invasion of privacy for a point when civil society would be distracted is not an outlier opinion. It’s a business strategy.

We all need to understand that the perceived inconvenience of alternatives is purposefully engineered propaganda, not an accidental occurrence. The choice isn’t between a convenient option and a worse one: it’s between an option built to extract value from you and one that isn’t. Simple as that.

Saying it that way won’t move everyone to change, but it might move enough people that the companies making these strategic bets start finding their calculations a little less reliable.

That would be inconvenient for them, which is precisely the point.

_____________________________________________________

Sources:

No One, Including Our Furry Friends, Will Be Safer in Ring’s Surveillance Nightmare, Electronic Frontier Foundation, Feb. 10, 2026

Many consumer electronics manufacturers ‘will go bankrupt or exit product lines’ by the end of 2026 due to the AI memory crisis, Phison CEO reportedly says, PC Gamer, Feb. 16, 2026

Apple Ramps Up Work on Glasses, Pendant, and Camera AirPods for AI Era, Bloomberg, Feb. 17, 2026

Several Billion Reasons for Facebook to Abandon Its Face Recognition Plans, Electronic Frontier Foundation, Feb. 13, 2026

Meta Plans to Add Facial Recognition Technology to Its Smart Glasses, The New York Times, Feb. 13, 2026

Social media needs (dumpster) fire exits, Cory Doctorow, Dec. 14, 2024

‘That’s not going to last’: Jeff Bezos believes AI will force you to rent your PC from the cloud, and the RAM crisis is accelerating it, Tom’s Guide, Jan. 16, 2026

FBI issues urgent warning to billions of Google Chrome users over dangerous hacking scam, Unilad, March 28, 2025